Traits of AI-Native People

Everyone's trying to become AI-native. They're trying to get their teams to become AI-native. Or they're looking to hire AI-native people.

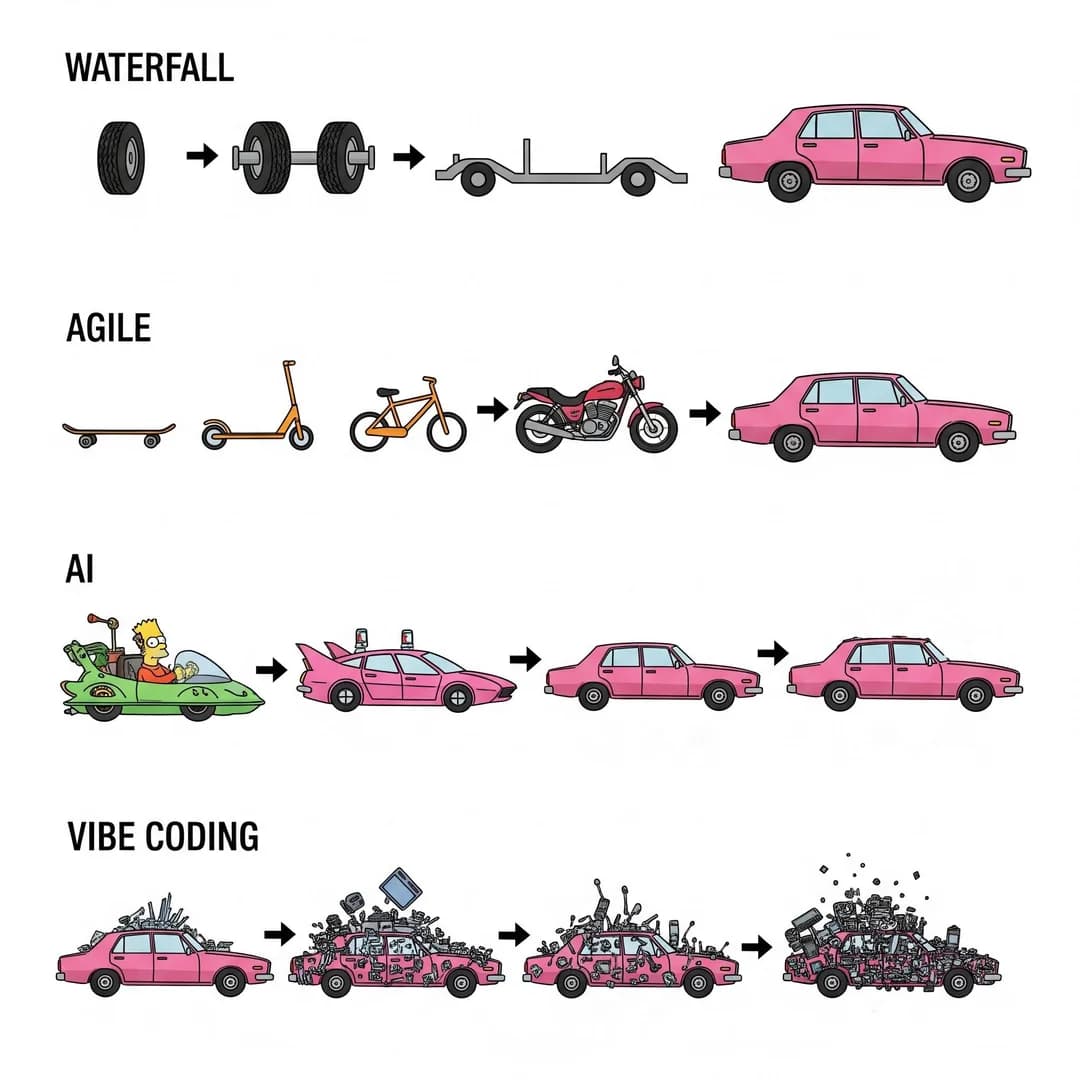

But what does AI-native even mean, and how do you measure something like that? A common response is to pin it on the number of tokens someone uses. More tokens, more AI-native. You've likely seen it on LinkedIn: "We blew through a trillion tokens, and the company-that-profits-from-it gave us a shiny plaque." Or that company dashboard that ranks employees by token usage. It's bogus.

I was talking to someone recently who described AI less as a compounding force and more as a magnifying one, and that feels right. Bad engineers don't become good because they use AI; they just produce more bad code, faster and with more confidence. Great engineers, especially the ones with the traits below, become dramatically more effective.

So I'm hoping to convince you there are better signals and traits that characterize AI-native people more clearly than token counts. These traits may not be as quantifiable as tokens used, but they are strong tells, whether you're looking to improve or hire well.

1. Effective rationers of tokens

I love asking people about their token ratios because it tells me a lot about how intentional they are with them.

What percentage of tokens go to context gathering, planning, and implementation? How many go to second opinions, stress tests, verification, or postmortems? A lot of newbies spend most of their tokens simply generating the final output, which is often the least interesting part and the one most likely to go wrong if you're not careful.
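One way to make that question concrete is to tag token usage by phase and compute the split. This is a minimal sketch that assumes you log usage yourself; the phase names and numbers are hypothetical, not measurements.

```python
from collections import defaultdict


def token_ratios(usage_log):
    """Return each phase's share of total tokens as a percentage."""
    totals = defaultdict(int)
    for phase, tokens in usage_log:
        totals[phase] += tokens
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {
        phase: round(100 * count / grand_total, 1)
        for phase, count in totals.items()
    }


# Hypothetical (phase, tokens) entries for a single task.
usage_log = [
    ("context", 12_000),
    ("planning", 8_000),
    ("implementation", 50_000),
    ("verification", 6_000),
    ("implementation", 24_000),
]

ratios = token_ratios(usage_log)
print(ratios)
```

In this made-up log, implementation takes 74% of the tokens and verification 6%, which is the lopsided split described above.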

AI-native people are more deliberate. They spend tokens to explore when uncertainty is high, narrow when it's time to decide, and use multiple models to get opposing viewpoints. And they stop when the next token would only produce more slop, rather than getting pulled into endless "fix this!" loops.

2. Adversarial verifiers

When an LLM can verify its own work, it can correct itself and keep iterating on a problem until it gets it right. So in many cases, the bottleneck isn't model capability anymore; it's how well you can define what "right" or "good" is for your problem.

This is clearer in some domains (code = tests pass) than others (writing = qualitative judgment). AI-native people are good at turning vague problems into verifiable ones. For example, when creating a customer support bot, a newbie may define a generic "helpfulness" score and ask an LLM to rate every response from 1-5. An AI-native person looks at real conversations and defines more precise checks: did the bot escalate angry customers, cite the right business rule, avoid making up refunds, and resolve the issue without a second contact? The difference is moving from vibes-based evaluation to specific failure modes the system can grade more clearly, so it can improve more productively.
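The support-bot checks above could be written down as named pass/fail predicates instead of a single score. This is a sketch, not a real eval framework: the `Conversation` fields and check names are hypothetical, and in practice each field would be labeled by a human or by an LLM judge.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    # Hypothetical labels for one support conversation.
    customer_angry: bool
    escalated: bool
    cited_rule: Optional[str]
    expected_rule: str
    refund_promised: bool
    refund_allowed: bool
    follow_up_contacts: int


# Each check maps to one specific failure mode, not a general "helpfulness" score.
CHECKS = {
    "escalated_angry_customer": lambda c: c.escalated or not c.customer_angry,
    "cited_right_rule": lambda c: c.cited_rule == c.expected_rule,
    "no_invented_refund": lambda c: c.refund_allowed or not c.refund_promised,
    "resolved_first_contact": lambda c: c.follow_up_contacts == 0,
}


def grade(conversation):
    """Run every check and return a name -> passed mapping."""
    return {name: check(conversation) for name, check in CHECKS.items()}


convo = Conversation(
    customer_angry=True,
    escalated=False,
    cited_rule="RETURNS-30",
    expected_rule="RETURNS-30",
    refund_promised=True,
    refund_allowed=False,
    follow_up_contacts=0,
)
results = grade(convo)
failed = [name for name, passed in results.items() if not passed]
print(failed)
```

Here the bot cited the right rule and resolved the issue in one contact, but it failed to escalate an angry customer and promised a refund it wasn't allowed to give. A 1-5 score would blur those two failures together.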

AI-native people are also adversarial verifiers: counterintuitively skeptical of AI, not because they use it less, but because they've used it so much in their domain. They know its jagged edges: where it excels, where it breaks down, and where it can sound convincing while being wrong. So they ask it to find flaws, surface hidden assumptions, test edge cases, and (ideally) decouple the implementer from the verifier, using one model to critique the work of another. Their skepticism is what lets them use AI more safely and more aggressively.
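Decoupling the implementer from the verifier might look like the loop below. It is only a sketch: `implement` and `verify` are hypothetical callables standing in for two different models, the stubs return canned strings so the example runs without an API, and `max_rounds` is an assumed budget that keeps the loop from running forever.

```python
from typing import Callable, Optional, Tuple


def refine(
    implement: Callable[[str, Optional[str]], str],
    verify: Callable[[str], Optional[str]],
    task: str,
    max_rounds: int = 3,
) -> Tuple[str, bool]:
    """Alternate between an implementer and an independent verifier.

    Returns (draft, accepted). Stops as soon as the verifier has no
    objection, or when the round budget is spent.
    """
    critique: Optional[str] = None
    draft = ""
    for _ in range(max_rounds):
        draft = implement(task, critique)
        critique = verify(draft)
        if critique is None:
            return draft, True
    return draft, False


def stub_implementer(task, critique):
    # Stand-in for model A: gets it wrong first, then fixes it after a critique.
    if critique:
        return "def add(a, b): return a + b"
    return "def add(a, b): return a - b"


def stub_verifier(draft):
    # Stand-in for model B: returns a critique, or None when satisfied.
    return None if "a + b" in draft else "add() subtracts instead of adding"


draft, accepted = refine(stub_implementer, stub_verifier, "write add()")
print(accepted, draft)
```

The verifier never sees the implementer's reasoning, only its output, which is the point of using a separate model.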

3. They care about other people's LLMs

AI-native people send context their coworkers can immediately hand to their LLMs. Usually that means prompts with all the important context attached or referenced. Their codebases have an llms.txt, and their docs or tools are exposed through a well-built MCP server.

Half our job now is facilitating communication between LLMs with varying contexts anyway. Why not lean into it?

4. Superior indexers of information

Tokens may be getting cheaper and faster, but your cognitive ability to process each token is not. Sifting through noise is more important now than ever.

Throwing more tokens at a problem, using more reasoning tokens, spending more LLM compute on planning, and so on, often works well. But it also creates far more information to process. AI-native people are exceptionally good at processing it.

Some of them are big brained and inherently good at it. Most, though, have built strong systems. Some use local memory systems. Some use LLMs to manage other LLMs. Some are simply disciplined about naming, summarizing, and storing context for future retrieval.

This also makes AI-native people progressive learners. They don't learn every system from the bottom up before touching it, but they also don't use AI as an excuse to stay ignorant. They learn in layers: enough to move, enough to know what assumptions they're making, and enough to recognize when an abstraction is starting to leak. A shallow understanding may be fine for a demo, but not when changing a payments flow, permissions model, or shared component other teams depend on. AI-native people use AI to build the map, then go deeper where the risk is highest. And when they do, they codify what they learn into tests, evals, or guardrails so the important parts are easier to retrieve, verify, and reuse later for their LLMs.

5. Moulders of raw material

Working on a new project with AI is less start-from-scratchy and more like moulding clay.

The first output is not always the thing you actually want, even when you've spent hours aligning or planning (though that certainly helps). Initial generations are raw material. AI-native people are good at taking that material and shaping it: cutting parts, combining parts, asking for variants (for example, several versions of a button rendered side by side in one HTML file), rejecting the obvious version, preserving the one strange bit that is actually good, and then pushing from there.

This is a different muscle than pushing through writer's block. You are not waiting for inspiration, and you are not treating the model's first answer as the answer. You are creating something to react to. Each reaction sharpens your taste, exposes a new option, or reveals what you actually meant.

Crucially, AI-native people use this to increase their decision space, not shrink it. Beginners often let the model choose for them without realizing it. AI-native people use the model to generate more points of judgment: more directions, more contrasts, and more tradeoffs. Instead of prompting "create a settings page for the app", they ask for 5 variations of what the page could look like, and ask what configurations could be exposed on the page. The point is not to avoid making decisions. It is to create better decisions to make.

6. Feedback loop pilled

AI-native people think in feedback loops. They base important changes to their product (like prompt or architecture changes) on feedback and build a pipeline that makes that easy. They have a system to identify patterns among bad sessions (some refer to these as "failure modes") that then translate to code fixes and enhancements.
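The pattern-finding step can start as small as a tally of failure modes across reviewed sessions. This sketch assumes each bad session has already been tagged by a human or an LLM judge; the session records and tag names are hypothetical.

```python
from collections import Counter

# Hypothetical reviewed sessions, each tagged with the failure modes observed.
sessions = [
    {"id": "s1", "failure_modes": ["hallucinated_policy", "no_escalation"]},
    {"id": "s2", "failure_modes": ["hallucinated_policy"]},
    {"id": "s3", "failure_modes": []},
    {"id": "s4", "failure_modes": ["wrong_tool_call", "hallucinated_policy"]},
]


def rank_failure_modes(sessions):
    """Count how many sessions exhibit each failure mode, most common first."""
    counts = Counter(
        mode
        for session in sessions
        for mode in set(session["failure_modes"])  # count once per session
    )
    return counts.most_common()


ranked = rank_failure_modes(sessions)
print(ranked[0])
```

The top entry is the first candidate for a prompt or code fix, and the same tally rerun after the fix shows whether it helped.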

When they don't have real customers using their product, they simulate customers using LLMs. Remember, as LLMs improve, so does LLM-as-<persona>, where that persona can be a tester, user, judge, etc.

7. Masters of alignment

Before LLMs, implementation was expensive and time-consuming, so alignment often happened through natural checkpoints: kickoff meetings, design reviews, code review, and all the conversations in between. The work moved slowly enough that teams had time to notice when they were building the wrong thing.

Now implementation is cheap enough that many people skip those checkpoints altogether. An issue can become a working PR in minutes. But skipping alignment does not remove the need for it; it just moves the cost later. You get features nobody asked for, agents touching the same files in conflicting ways, and teams producing more output without producing more impact.

AI-native people understand that alignment has to become a shared input to the work, not a late review of the output. The plan, open questions, and decision history need to be visible to the team (humans) and usable by the agents.
